By Jen Maravegias | Miscellaneous | October 12, 2021

If you’re not an 80-year-old shut-in, you might have missed this past weekend’s 60 Minutes segment about Synthetic Media, AKA “Deep Fakes.”

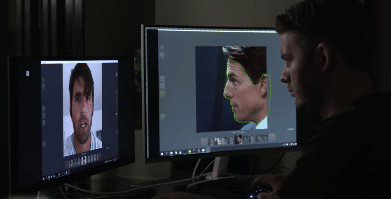

We’ve been talking about Deep Fakes for a few years here at Pajiba. We may be a little obsessed with them. And by “we” I mean Petr. Most recently, you may remember the controversy over the summer surrounding Morgan Neville’s use of Deep Fake audio technology to create a voice-over of Anthony Bourdain for his documentary. But we also talked about Harrison Ford being Deep Faked into Solo, and Pete Davidson’s face being Deep Faked onto Ariana Grande back when they were A Thing. Oh, and this isn’t even the first time we’re talking about Deep Fake Tom Cruise!

This two-year-old video of a Deep Faked Keanu Reeves is a good way to see how far the technology has come in a short amount of time. And this one, of Elon Musk’s face superimposed into Miley Cyrus’s ‘Wrecking Ball’ video, is a good example of a “benevolent” yet super disturbing use of the technology.

Ben Sasse was ringing the alarm bell about the dangers of all this back in 2018 in the Senate, where he introduced the Malicious Deep Fake Prohibition Act.

“Deepfakes—seemingly authentic video or audio recordings that can spread like wildfire online—are likely to send American politics into a tailspin, and Washington isn’t paying nearly enough attention to the very real danger that’s right around the corner.” https://t.co/PViC6Y5YNm

— Ben Sasse (@BenSasse) October 19, 2018

So I guess 60 Minutes decided it was time to bring The Boomers up to speed.

“We believed as long as we're making clear this is a parody, we're not doing anything to harm his image,” says visual effects artist Chris Umé, the creator of the hyper-realistic Tom Cruise deepfakes. https://t.co/PSLAaF2z2f pic.twitter.com/3XwUlikeCL

— 60 Minutes (@60Minutes) October 10, 2021

I’m going to go on the record right now as being fully against this deeply creepy business. As political scientist and author Nina Schick explained in that 60 Minutes interview, all of this ha ha super funsies, let’s-make-it-look-like-celebrities-are-saying-wacky-things baloney is really the beginning of what is likely to be the next Big Mess on the internet. We’ve already seen it deployed against female celebrities who have had their likenesses Deep Faked into porn videos.

Show of hands, who is surprised by that … I’ll wait … Yeah, that’s what I thought.

Although none of the experts believe it has yet been used to influence political elections here or abroad, it’s only a matter of time before we’re going to have to question every video posted of anyone saying anything. That’s not a good thing. This country is already suffering from an overabundance of citizens who refuse to believe what they see and hear as truth. Once we start throwing Deep Fakes into the mix, there will just be more reason to doubt everything.

Over on Twitter, Vincent D’Onofrio made some good and interesting points about this from an actor’s point of view. Who is protecting actors from having their likenesses stolen from them? What’s preventing anyone from creating a Deep Fake of an actor to use in a project instead of paying the actor themselves for their participation?

This is a perfect example of why my union sucks. We are so behind the time's.

— Vincent D'Onofrio (@vincentdonofrio) October 11, 2021

This should not be something anyone can just do just because they have the ability to do it.

There should be a process set in place where the person who's face they are replicating should be involved. https://t.co/KULDi88hzW

According to the 60 Minutes interview, Synthesia, a London-based company, has already used Deep Fake technology in an ad campaign featuring Snoop Dogg. European food delivery company Just Eat shot an expensive commercial with the rapper/actor/weed aficionado and didn’t want to spend more money shooting a second version for its Australian subsidiary, Menulog. Synthesia’s technicians took the original video and created a new version where, instead of saying “Just Eat,” Snoop’s mouth says “Menulog.” I’d like to see the contract for that shoot. Usually, there are hourly rates paid for commercial work and then rates paid for “versions” of the same spot. Is this considered a “version” of the original commercial? Is it handled the same way, contractually, as editing a 60-second spot down to a 30? So many questions and, as usual where technology is involved, so few answers and so few regulations.

Chris Umé, the creator of the Tom Cruise Deep Fake TikTok videos, says he’s just in it to entertain and raise awareness of the technology. He doesn’t think there’s anything wrong with what he’s doing, as long as he makes it clear that it’s a parody. That’s a slippery slope because once the technology is out in the open, anyone can use it for anything. Umé’s videos are getting millions of views and lots of media attention. What happens next isn’t really up to him, or to Tom Cruise. If we’re relying on the internet to maintain a moral compass around this technology, we’re all screwed.